What does it really take to understand whether mentoring changes young people’s lives?

At the Centre for Public Impact, we set out to answer this question — and what we found transformed how we think about evaluation itself.

“We are constantly finding ways to measure what we do, but we are nowhere near. It’s a hard thing to do—being a theorist and a practising mentor… we need help to find new ways that capture the holistic impact.”

— Mentor and Music Educator, HMDT Music

Why we took a different approach

In 2024, the Greater London Authority (GLA) commissioned us to evaluate the Mayor’s New Deal for Young People (NDYP) – a £34 million investment to improve the quality, reach, and sustainability of youth mentoring across London.

The programme brings together a wide network of organisations supporting young Londoners facing significant barriers to education, employment, and opportunity.

To understand its impact, we had to look beyond delivery metrics and ask:

- How is mentoring actually experienced by young people and their mentors?

- How do organisations sustain this work within tight funding cycles?

- How does the wider system shape what mentoring can achieve?

To answer these questions, we adopted a transformative evaluation approach – centring lived experience through qualitative and participatory methods such as storytelling, journey mapping, and peer-led research.

Rather than analysing from the outside, we created space for young people, organisations, and GLA colleagues to reflect on their work together.

Letting those closest to the work define impact

Mentoring is not simply a service. It is a network of relationships that can shape how young people see themselves and their futures.

This matters for how we evaluate. Traditional metrics can tell you whether targets were met, but they cannot tell you why a young person finally trusted an adult enough to ask for help, or what it meant to them when someone showed up consistently. That kind of impact is real, and it matters.

So instead of asking whether mentoring ‘worked’, we asked:

‘How is it experienced — by different young people, in different contexts, and at different points in their journeys?’

This shift revealed differences in access, trust, and perceived value that would otherwise remain hidden. It also challenged our assumptions about what success looks like.

When people closest to the work define impact, the picture becomes richer and more honest. It captures confidence, relationships with the systems around them, and a growing sense of possibility, not just attendance or outcomes.

Building the infrastructure for participatory evaluation

If evaluation starts with lived experience, the process itself must change.

Working with peer researchers brought this into focus. Many young people balanced the project with studies and other commitments. Sustaining engagement required:

- Flexible timelines

- One-to-one support

- Treating participation as optional, not expected

It also highlighted how much of participatory evaluation goes unseen. Behind the scenes, this work includes:

- Upskilling peer researchers

- Maintaining consistent points of contact

- Adapting workshops

- Navigating safeguarding and consent

- Coordinating across organisations

This labour is rarely recognised, yet it is essential to making participatory processes work.

Supporting organisations to engage sustainably

The same applies to delivery organisations.

While many were enthusiastic about participatory approaches, they also face real constraints: tight funding cycles, limited capacity, and the constant demands of day-to-day delivery.

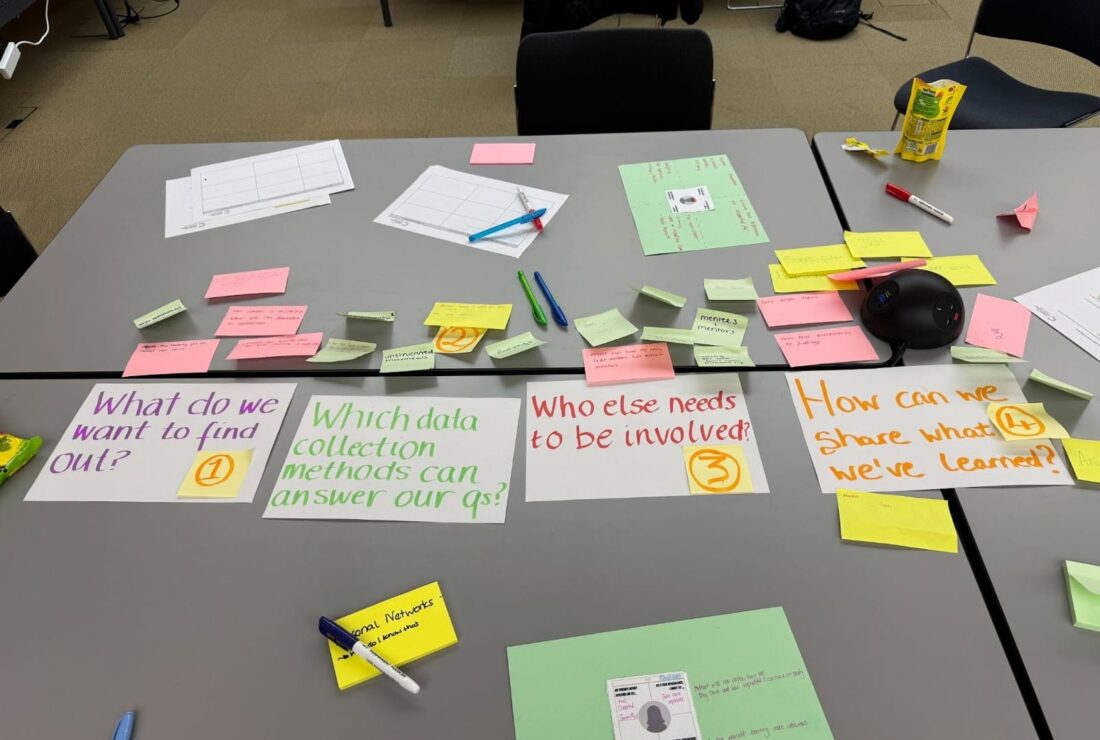

This became clear during a co-design session testing the Storytelling Toolkit for Impact Evaluation. The conversation quickly shifted from concepts to practicalities:

- How would the toolkit’s methods fit within existing programmes?

- What kind of facilitation do they require?

- How could insights feed into learning without adding burden?

Participatory approaches cannot simply be layered on top of existing work; they must be designed to fit alongside what organisations are already doing day to day.

Rethinking the evaluator’s role

This approach also challenged our own role at CPI. Rather than acting as external partners, we convened conversations, held space for reflection, and surfaced tensions that might otherwise remain implicit. In practice, this often meant navigating trade-offs between the pace of participatory processes and the timelines of programme delivery and reporting.

We came to see these tensions not as failures, but as evidence of the complexity of doing this work well. Transformative evaluation asks us to acknowledge that:

- Meaningful participation takes time

- Relationships require care

- Lived experience does not always fit neatly into traditional reporting cycles

“I learned that listening to someone else’s story can open up your eyes to the world and see how others feel.”

– Peer Researcher

Why it makes a difference

For the NDYP, this approach enabled an evaluation that was:

- Responsive to complexity

- Attentive to equity

- Genuinely useful for practice, policy, and commissioning

But more than that: the process itself created value.

It was not always straightforward, but that was precisely the point. By centring the voices of young people and practitioners, the findings carry credibility that more extractive approaches lack.

The evaluation also became an opportunity for growth for everyone:

- Peer researchers developed new skills

- Organisations shared best practices

- Participants reflected more deeply on their work

It is a reminder that evaluation does not have to be a one-way transaction. Done well, it gives something back to the practitioners doing the work, to the young people whose experiences shape it, and to the wider sector learning from it.

If this approach resonates with you, explore the resources from this work — including the final report, storytelling toolkit, and peer research insights — to support your own practice.

We’re continuing to develop and apply transformative approaches to evaluation across different contexts. If you’re interested in collaborating or rethinking how impact is understood and measured in your work, we’d love to hear from you.